I’ve been having problems with high CPU usage on my InterWorx install. We’ve diagnosed it as possibly something wrong with MySQL. Is it possible to reinstall MySQL on the InterWorx server without breaking everything?

Hi Mikie

I hope you don’t mind, but my thoughts are that it is not MySQL, but to answer your post

Please make full backups of all database(s)
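As a rough sketch of the backup step, something like the following would dump every database to one timestamped file. The backup directory and the function names are made up for illustration, and it assumes the MySQL credentials are already in ~/.my.cnf. It only covers the user-facing MySQL, not the separate instance InterWorx runs for itself.

```shell
#!/bin/sh
# Hypothetical backup location; pick a directory with enough free space.
BACKUP_DIR="${BACKUP_DIR:-/root/mysql-backups}"

# Print a timestamped path for this run's dump file.
backup_file() {
    printf '%s/all-databases-%s.sql\n' "$BACKUP_DIR" "$(date +%Y%m%d-%H%M%S)"
}

# Dump every database to one file. Assumes credentials are in ~/.my.cnf.
dump_all() {
    mkdir -p "$BACKUP_DIR" || return 1
    out=$(backup_file)
    mysqldump --all-databases --routines --events > "$out" || return 1
    echo "wrote $out"
}

# Nothing is dumped until you call it by hand:
#   dump_all
```

It is worth checking that the resulting file is non-empty and copying it off the server before touching any packages.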

SSH into server

(remove Percona if you’re using it)

cd /opt

wget http://updates.interworx.com/iworx-stable/SRPMS/mysql-iworx-5.1.47-7.iworx.src.rpm

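(Please note - check the current RPMS from IW first, to make sure you download the correct one for your distro. The link above points at a source package in SRPMS, which rpm only unpacks for rebuilding, so you want the matching binary .rpm - http://updates.interworx.com/)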

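Now you can either install over the existing package with --replacepkgs, or remove it with rpm -e and install it again with rpm -i. Note that rpm -e takes the installed package name (as listed by rpm -qa), not the .rpm file name.

Install over same package

rpm -iv --replacepkgs <XXX.rpm>

Remove and Install

rpm -e <package-name>

rpm -i <XXX.rpm>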
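To rehearse either route before running it for real, a small dry-run wrapper can help. This is only a sketch: the run helper, the DRY_RUN variable and the function names are my own inventions, and the package and file names are placeholders. Note that rpm -e wants the installed package name rather than the .rpm file name.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) only prints each command; set DRY_RUN=0 to execute.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Option 1: install over the same package. $1 = path to the .rpm file.
reinstall_in_place() {
    run rpm -iv --replacepkgs "$1"
}

# Option 2: remove, then install.
# $1 = installed package name (as shown by `rpm -qa`), $2 = path to the .rpm file.
remove_then_install() {
    run rpm -e "$1" && run rpm -i "$2"
}

# Example with placeholder names:
#   reinstall_in_place XXX.rpm
#   remove_then_install some-package XXX.rpm
```

With the default settings this prints the commands it would run, so you can read them over before setting DRY_RUN=0.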

I hope that helps, but please ensure you have backups before doing anything, as I take no responsibility if the above crashes your system or otherwise.

Many thanks

John

I’m baffled as to why this server is having so many problems with IW. When I log into IW, the server crashes or stops responding, and it appears to be high MySQL usage every time. There should be no reason why this configuration (3 GB / 2 CPUs) should be brought to its knees. When I contact IW support they say it’s not IW, but it is squarely pointing to an IW issue, as the server is OK unless someone logs into IW.

Reinstall MySQL?

Hi Mikie

Many thanks, and to be honest, if it were to turn out to be an IW issue causing the high server usage, then yours would be the first.

If you have already opened a support ticket, and IW support have had a look and advised that it is not an IW issue, they will be telling you the truth. That is in line with my thoughts on your high usage post, which Steve Tozer was also inclined to agree with. From memory, it was high php-cgi loading that was bringing MySQL loading up as well, from user universve I think. As a simple test, stop this user and see if the loading stops (you may need to fully restart services, though, to clear anything lingering).

However, that said, as we do not know your full setup, it’s more educated guess work from forums perspective, so I would never say never, but as I said, yours would be the first.

Also, if you’re now referring to IW MySQL, then please do not use my first post, as IW uses its own version of MySQL, which is different from the MySQL the SiteWorx users use.

Many thanks

John

Hi mikei

Many thanks, but my eyesight is not what it used to be, so please could you post a readable picture, as from the little I can make out, I still can’t see how it’s IW sorry.

Many thanks

John

Hi mikei

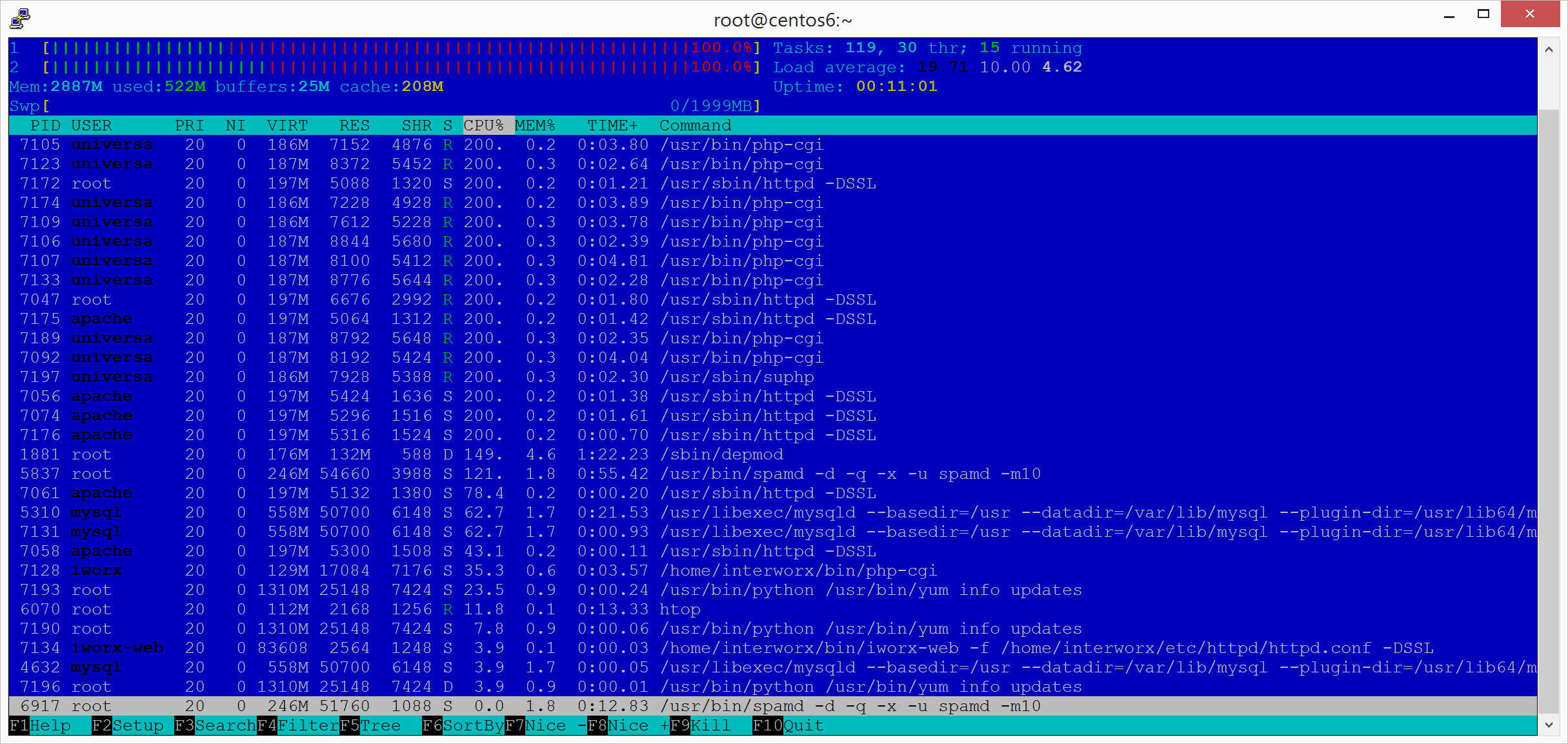

Many thanks, it’s slightly better thank you.

I’m sorry though, I am still not seeing how it relates to IW.

I could be entirely wrong, but IW runs under iWorx, and the culprit still looks to be universa for loading.

I’ll wait and see what other users think; as I said, I could be wrong.

Many thanks

John

Hello,

Looking at that screenshot, there does seem to be something running under the account universve, as John pointed out. Have you tried optimizing MySQL at all using tuning-primer.sh? It may suggest some adjustments to /etc/my.cnf, for example enabling the query cache. I would look at going down this route to see if it helps.
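To give an idea of what such an adjustment might look like, here is a small sketch. It checks whether an option is already present in the config file and, if not, prints a candidate fragment to review. The function names are hypothetical and the 16M figure is only a placeholder starting point, not a recommendation for this server.

```shell
#!/bin/sh
# Succeeds if option $1 is already set in config file $2 (default /etc/my.cnf).
# Only matches the underscore spelling at the start of a line.
has_setting() {
    grep -Eq "^[[:space:]]*$1[[:space:]]*=" "${2:-/etc/my.cnf}" 2>/dev/null
}

# Print a candidate fragment for review; values are illustrative placeholders.
query_cache_fragment() {
    cat <<'EOF'
# Goes under the existing [mysqld] section
query_cache_type = 1
query_cache_size = 16M
EOF
}

if has_setting query_cache_size; then
    echo "query_cache_size is already set; edit that line instead"
else
    query_cache_fragment
fi
```

Nothing here edits /etc/my.cnf. Any change still has to be made by hand and needs a MySQL restart to take effect.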

Steve

Most interestingly it says my server was only running for 19 hours… I didn’t restart it.

I’ll re-run it over the weekend, but from this does anyone have suggestions on if and where I would change these?

-- MYSQL PERFORMANCE TUNING PRIMER --

- By: Matthew Montgomery -

MySQL Version 5.5.40-log x86_64

Uptime = 0 days 19 hrs 49 min 10 sec

Avg. qps = 13

Total Questions = 973193

Threads Connected = 1

Warning: Server has not been running for at least 48hrs.

It may not be safe to use these recommendations

To find out more information on how each of these

runtime variables effects performance visit:

http://dev.mysql.com/doc/refman/5.5/en/server-system-variables.html

Visit http://www.mysql.com/products/enterprise/advisors.html

for info about MySQL’s Enterprise Monitoring and Advisory Service

SLOW QUERIES

The slow query log is enabled.

Current long_query_time = 5.000000 sec.

You have 18 out of 973220 that take longer than 5.000000 sec. to complete

Your long_query_time seems to be fine

BINARY UPDATE LOG

The binary update log is NOT enabled.

You will not be able to do point in time recovery

See http://dev.mysql.com/doc/refman/5.5/en/point-in-time-recovery.html

WORKER THREADS

Current thread_cache_size = 0

Current threads_cached = 0

Current threads_per_sec = 3

Historic threads_per_sec = 0

Threads created per/sec are overrunning threads cached

You should raise thread_cache_size

MAX CONNECTIONS

Current max_connections = 151

Current threads_connected = 1

Historic max_used_connections = 28

The number of used connections is 18% of the configured maximum.

Your max_connections variable seems to be fine.

INNODB STATUS

Current InnoDB index space = 45 M

Current InnoDB data space = 106 M

Current InnoDB buffer pool free = 21 %

Current innodb_buffer_pool_size = 128 M

Depending on how much space your innodb indexes take up it may be safe

to increase this value to up to 2 / 3 of total system memory

MEMORY USAGE

Max Memory Ever Allocated : 229 M

Configured Max Per-thread Buffers : 415 M

Configured Max Global Buffers : 152 M

Configured Max Memory Limit : 567 M

Physical Memory : 2.81 G

Max memory limit seem to be within acceptable norms

KEY BUFFER

Current MyISAM index space = 105 M

Current key_buffer_size = 8 M

Key cache miss rate is 1 : 256

Key buffer free ratio = 81 %

Your key_buffer_size seems to be fine

QUERY CACHE

Query cache is supported but not enabled

Perhaps you should set the query_cache_size

SORT OPERATIONS

Current sort_buffer_size = 2 M

Current read_rnd_buffer_size = 256 K

Sort buffer seems to be fine

JOINS

Current join_buffer_size = 132.00 K

You have had 6 queries where a join could not use an index properly

You should enable “log-queries-not-using-indexes”

Then look for non indexed joins in the slow query log.

If you are unable to optimize your queries you may want to increase your

join_buffer_size to accommodate larger joins in one pass.

Note! This script will still suggest raising the join_buffer_size when

ANY joins not using indexes are found.

OPEN FILES LIMIT

Current open_files_limit = 1024 files

The open_files_limit should typically be set to at least 2x-3x

that of table_cache if you have heavy MyISAM usage.

Your open_files_limit value seems to be fine

TABLE CACHE

Current table_open_cache = 400 tables

Current table_definition_cache = 400 tables

You have a total of 1835 tables

You have 400 open tables.

Current table_cache hit rate is 6%, while 100% of your table cache is in use

You should probably increase your table_cache

You should probably increase your table_definition_cache value.

TEMP TABLES

Current max_heap_table_size = 16 M

Current tmp_table_size = 16 M

Of 23802 temp tables, 35% were created on disk

Perhaps you should increase your tmp_table_size and/or max_heap_table_size

to reduce the number of disk-based temporary tables

Note! BLOB and TEXT columns are not allow in memory tables.

If you are using these columns raising these values might not impact your

ratio of on disk temp tables.

TABLE SCANS

Current read_buffer_size = 128 K

Current table scan ratio = 11 : 1

read_buffer_size seems to be fine

TABLE LOCKING

Current Lock Wait ratio = 1 : 13451

Your table locking seems to be fine

Hello,

To get an accurate reflection of which settings to amend, the MySQL service needs to have been running for at least 48 hours. Could you leave the service running and then re-run the script?
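If it helps, the 48 hour check can be scripted against the Uptime line the tool prints. This is just a sketch with made-up function names, and it assumes the line keeps the exact "Uptime = D days H hrs ..." layout shown in the output above.

```shell
#!/bin/sh
# Convert a tuning-primer uptime line into whole hours.
# Expects the format: Uptime = 0 days 19 hrs 49 min 10 sec
uptime_hours() {
    echo "$1" | awk '{ print $3 * 24 + $5 }'
}

# Succeeds once the server has been up for at least 48 hours.
uptime_ok() {
    [ "$(uptime_hours "$1")" -ge 48 ]
}

line='Uptime = 0 days 19 hrs 49 min 10 sec'
if uptime_ok "$line"; then
    echo "ok to act on the recommendations"
else
    echo "only $(uptime_hours "$line") hours of uptime; wait and re-run"
fi
```

For the run posted above this reports 19 hours, so the recommendations are best treated as provisional.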

Steve

Rekindling this thread.

I tried to run the tool and found out that my MySQL keeps crashing. After further investigation IW support notified me that I potentially have several versions running. When I was having issues earlier my reseller installed the updated mysql and somehow it looks like they’re both competing to run at the same time.

mysql --version

mysql Ver 14.14 Distrib 5.5.40, for Linux (x86_64) using readline 5.1

[root@centos6 ~]# rpm -qa | grep mysql

mysql-libs-5.5.40-1.el6.remi.x86_64

mysql-devel-5.5.40-1.el6.remi.x86_64

compat-mysql51-5.1.54-1.el6.remi.x86_64

mysql-5.5.40-1.el6.remi.x86_64

mysql-server-5.5.40-1.el6.remi.x86_64

mysql-iworx-4.0.21-12.rhe6x.iworx.x86_64

php-mysql-5.4.33-2.el6.remi.x86_64

[root@centos6 ~]#

In addition, my logs show:

From the logs:

Version: '5.5.40-log' socket: '/var/lib/mysql/mysql.sock' port: 3306 MySQL Community Server (GPL) by Remi

InnoDB: Unable to lock ./ibdata1, error: 11

InnoDB: Check that you do not already have another mysqld process

It does look like either the server didn't stop properly, or possibly one of the other installations was utilizing the port.

When I went back to my reseller he tagged out saying he wasn’t going to take responsibility for installing the remi version (even though he did) and wouldn’t uninstall mysql for fear of breaking things. Well, unfortunately things are broken (but running) - anyone have an idea of how I would safely remove mysql without breaking everything?

Also, I failed to post this last time because I didn’t understand whether it was relevant, but clearly the tool has found two sockets open:

[root@centos6 ~]# tuning-primer.sh

Using login values from ~/.my.cnf

- INITIAL LOGIN ATTEMPT FAILED -

Testing for stored webmin passwords:

None Found

Could not auto detect login info!

Found potential sockets: /home/interworx/var/run/mysql.sock

/var/lib/mysql/mysql.sock

Using: /var/lib/mysql/mysql.sock
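One way to see which mysqld processes are actually behind those sockets is to look at their command lines. The sketch below assumes each mysqld was started with an explicit --socket= argument, which may not hold on every setup. The function name is made up, and grep -o is a GNU extension.

```shell
#!/bin/sh
# Read `ps -eo args` style lines on stdin and print the --socket value of
# every mysqld command line found.
mysqld_sockets() {
    grep 'mysqld' | grep -o -- '--socket=[^ ]*' | cut -d= -f2
}

# Live check against the running system:
ps -eo args | mysqld_sockets
```

Seeing the same socket more than once, or more mysqld processes than expected, would back up the idea of two servers competing for the same data files.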

Hello,

From that, it seems you are only running the Remi version, which is up to date, and the iworx version, which is standard for InterWorx.

The error in the log is most likely because MySQL crashed and left its PID file behind. Removing the stale PID file and then restarting MySQL should work.
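Before deleting anything, it is worth confirming that the PID file really is stale. A minimal sketch follows; the function name is hypothetical and /var/run/mysqld/mysqld.pid is an assumed location, so check the pid-file setting in /etc/my.cnf for the real one.

```shell
#!/bin/sh
# Report whether the PID recorded in file $1 belongs to a live process.
# Prints "missing", "running <pid>" or "stale <pid>". Run as root so that
# kill -0 can probe processes owned by the mysql user.
pid_file_state() {
    [ -f "$1" ] || { echo "missing"; return 0; }
    pid=$(cat "$1")
    if [ -n "$pid" ] && kill -0 "$pid" 2>/dev/null; then
        echo "running $pid"
    else
        echo "stale $pid"
    fi
}

# Assumed path; check pid-file in /etc/my.cnf for the real one.
pid_file_state /var/run/mysqld/mysqld.pid
```

If it reports "running", a mysqld is still alive and holding the data files, so removing the PID file would not clear the ibdata1 lock error.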

So what you’re saying is that it’s probably not IW MySQL that is the problem… and I’m back to square one trying to figure out what is crashing MySQL? I’m ok with ruling that out… but still trying to figure out what is crashing MySQL.

Without having direct access to your server, it’s pretty hard to suggest anything else. It may now be worth hiring a system admin to check over your server.